The hard parts of coding agents aren’t the model calls.

They’re everything around the agent: review loops, artifacts, comments, isolation, and getting the output back into normal engineering workflows.

I realized this recently while building a system along those lines. I wasn’t trying to invent a new orchestration layer. I just wanted to rebuild the pattern and push it until the rough edges became obvious.

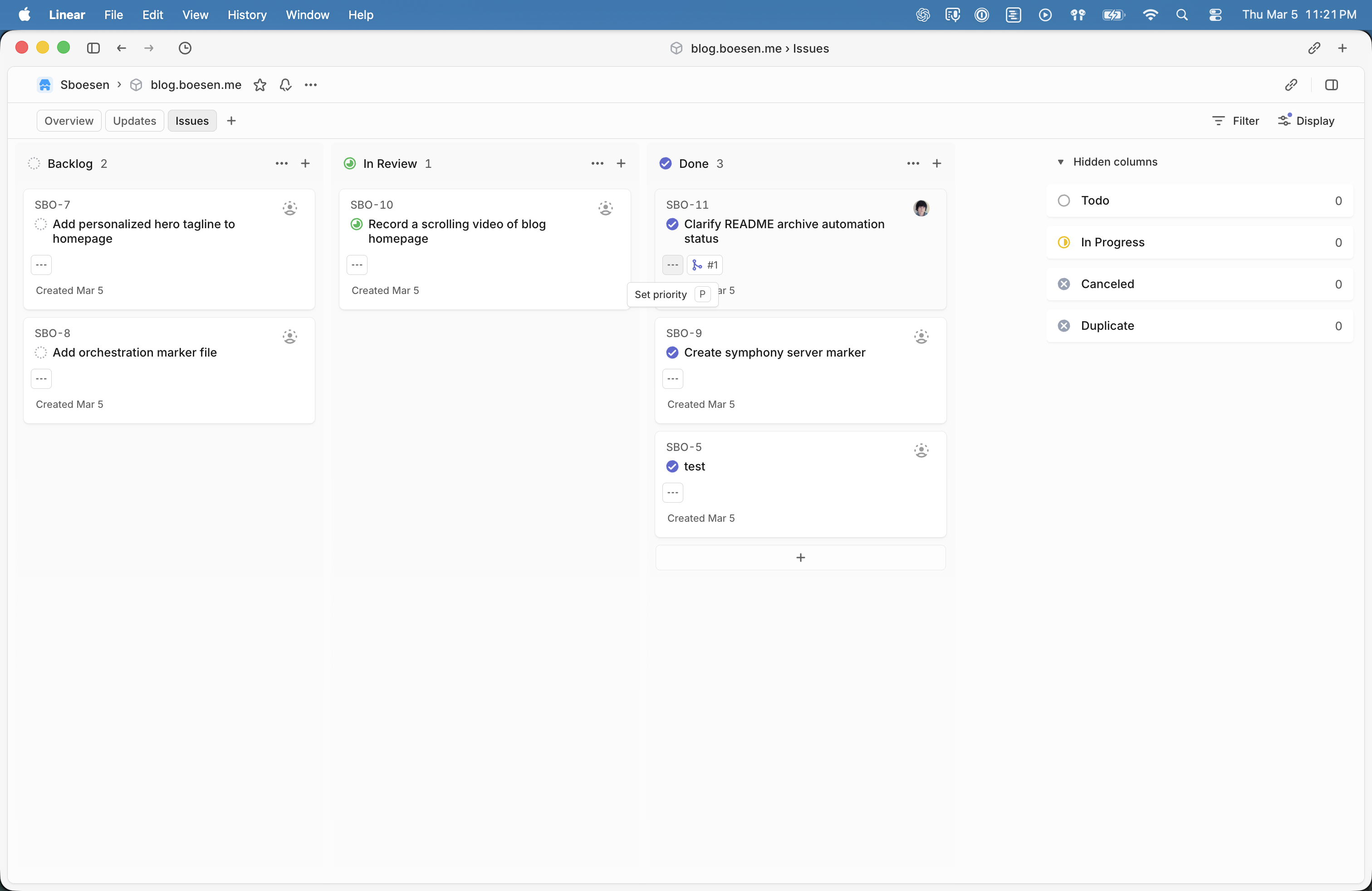

Around the same time, OpenAI released Symphony.

At first glance it looks like what you’d expect from an agent orchestration project: agents watch a board of work, pick up issues, run tasks in isolated environments, produce artifacts, and hand the results back for review.

But the part that caught my attention wasn’t the workflow. It was how OpenAI suggests you try it.

The README doesn’t start with “clone this repo and run the demo.” The first option is essentially: implement Symphony yourself from the Markdown spec. The reference implementation comes second.

That’s a little wild.

Shipping a spec isn’t new, but it’s rare to see it positioned this directly. In this case the spec is clearly treated as the real product, and the code is just one example of how you might realize it.

Review loops are the real product

The first version I built followed the classic “agent takes an issue and opens a PR” approach. It technically worked: the agent read the issue, generated a change, pushed a branch, and opened a pull request. But the workflow felt wrong almost immediately.

Real engineering work is rarely a one-shot operation. Someone reviews the change, leaves comments, asks questions, or requests modifications. The author updates the code, pushes a new revision, and the process repeats until it converges.

Agents need to participate in that loop. They have to read review comments, update artifacts, respond in threads, and push revisions. Without that capability the pull request becomes a dead end where humans have to take over.
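Here is a minimal Python sketch of one review round that makes the loop concrete. It assumes a pull request that carries a list of review comments. `ReviewComment`, `PullRequest`, `revise`, and `review_round` are hypothetical names for illustration, not Symphony’s API, and `revise` stands in for the actual agent call. Each round addresses every open comment, replies in its thread, and pushes a single new revision. The loop stops when no open comments remain.

```python
from dataclasses import dataclass, field

# Hypothetical types for illustration only; not taken from Symphony.
@dataclass
class ReviewComment:
    id: int
    body: str
    resolved: bool = False

@dataclass
class PullRequest:
    revision: int = 1
    comments: list[ReviewComment] = field(default_factory=list)
    replies: dict[int, str] = field(default_factory=dict)  # comment id -> agent reply

def revise(pr: PullRequest, comment: ReviewComment) -> str:
    """Placeholder for the agent call that edits code in response to one comment."""
    return f"Addressed in revision {pr.revision + 1}: {comment.body}"

def review_round(pr: PullRequest) -> bool:
    """Handle every open comment, push one revision, and report whether anything changed."""
    open_comments = [c for c in pr.comments if not c.resolved]
    if not open_comments:
        return False  # converged: nothing left to address
    for comment in open_comments:
        pr.replies[comment.id] = revise(pr, comment)  # respond in the thread
        comment.resolved = True
    pr.revision += 1  # one push per round, like a human author
    return True

if __name__ == "__main__":
    pr = PullRequest(comments=[ReviewComment(1, "rename foo"), ReviewComment(2, "add a test")])
    while review_round(pr):
        pass
    print(pr.revision, pr.replies[1])
```

In a real system, new comments arrive between rounds. So `review_round` would run whenever the tracker reports fresh review activity, not in a tight loop.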

Once the agent could actually react to review feedback, the system started to feel much more like a collaborator than a code generator. And once the agent is part of that workflow, another piece starts to matter more than you might expect.

Comments become a control surface

Comments turned out to matter more than I expected. At first they look like a simple output channel: somewhere the agent can post logs or explanations. But they quickly turn into something more interesting.

Engineers can leave a comment asking the agent to clarify an assumption, rerun something, or explore a different direction. The agent reads the thread and adjusts its behavior accordingly.
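One way to treat comments as a control surface is to recognize a small set of directives inside the thread. This sketch assumes a made-up convention: a line that starts with `@agent` followed by a verb is an instruction. The `@agent` mention and the verbs `clarify`, `rerun`, and `explore` are hypothetical, chosen to mirror the examples above. Unknown verbs are ignored rather than guessed at.

```python
import re
from dataclasses import dataclass

# Hypothetical convention: "@agent <verb> <argument>" on its own line.
DIRECTIVE = re.compile(r"^@agent\s+(?P<verb>\w+)\s*(?P<arg>.*)$", re.IGNORECASE)
KNOWN_VERBS = {"clarify", "rerun", "explore"}

@dataclass
class Directive:
    verb: str
    arg: str

def parse_directives(thread: list[str]) -> list[Directive]:
    """Extract steering instructions from a comment thread, oldest first."""
    directives = []
    for comment in thread:
        for line in comment.splitlines():
            match = DIRECTIVE.match(line.strip())
            # Ordinary discussion and unknown verbs are skipped.
            if match and match["verb"].lower() in KNOWN_VERBS:
                directives.append(Directive(match["verb"].lower(), match["arg"].strip()))
    return directives
```

Ordinary discussion passes through untouched, so humans can keep talking to each other in the same thread.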

It’s not a full conversational interface, but it’s enough to steer the work without leaving the issue tracker. Once comments started working this way, the next thing that became obvious was how important the outputs themselves were.

Artifacts matter more than logs

Early versions of the system produced a lot of logs. The agent would explain what commands it ran, what decisions it made, and how it interpreted the task. That’s useful for debugging, but it’s not what engineers actually review.

What people care about are artifacts: generated code, test results, screenshots, reports, or other outputs that represent the result of the work. Logs explain the process. Artifacts let you evaluate the outcome.
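This sketch shows what first-class artifacts might look like as data. It assumes each task returns typed artifacts alongside a separate log. `Artifact`, `ArtifactKind`, and `TaskResult` are hypothetical names. The point is only that whatever gets posted back for review is built from artifacts, while the log stays available for debugging.

```python
from dataclasses import dataclass, field
from enum import Enum

class ArtifactKind(Enum):
    DIFF = "diff"
    TEST_REPORT = "test_report"
    SCREENSHOT = "screenshot"
    REPORT = "report"

@dataclass(frozen=True)
class Artifact:
    kind: ArtifactKind
    path: str     # where the output lives in the task workspace
    summary: str  # one line a reviewer sees on the issue

@dataclass
class TaskResult:
    artifacts: list[Artifact] = field(default_factory=list)
    log: list[str] = field(default_factory=list)  # kept for debugging, not for review

    def review_summary(self) -> str:
        """Render what goes back to the issue: artifacts only, logs stay out."""
        lines = [f"- [{a.kind.value}] {a.summary} ({a.path})" for a in self.artifacts]
        return "\n".join(lines) if lines else "No artifacts produced."
```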

Once artifacts became first-class objects in the system, everything made more sense. But artifacts introduce another problem: execution environments.

Isolation is not optional

Running agents in a shared environment gets messy quickly. Dependencies conflict, state leaks between tasks, and reproducing results becomes painful. As soon as multiple issues run at the same time, they start stepping on each other.

The fix is simple. Every task needs its own workspace, with a separate directory, isolated dependencies, and a reproducible environment.

Without that isolation, the whole system becomes fragile. Once each task had its own environment, the rest of the workflow started falling into place.
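Below is a minimal sketch of a per-task workspace in Python. It assumes directory-level isolation is enough for the tasks at hand. `task_workspace` is a hypothetical helper. It creates a fresh directory per task, points `HOME`, `TMPDIR`, and pip’s cache into it, and deletes it afterwards. It does not isolate the network, system packages, or CPU and memory, which is why many setups use containers or VMs instead.

```python
import os
import shutil
import tempfile
from contextlib import contextmanager
from pathlib import Path
from typing import Optional

@contextmanager
def task_workspace(task_id: str, root: Optional[str] = None):
    """Give one task its own directory and environment, then clean both up."""
    workdir = Path(tempfile.mkdtemp(prefix=f"task-{task_id}-", dir=root))
    (workdir / "tmp").mkdir()
    env = {
        "PATH": os.environ.get("PATH", ""),
        "HOME": str(workdir),  # keep dotfiles and caches out of the real home
        "TMPDIR": str(workdir / "tmp"),
        "PIP_CACHE_DIR": str(workdir / ".cache" / "pip"),
    }
    try:
        yield workdir, env
    finally:
        shutil.rmtree(workdir, ignore_errors=True)  # no state survives the task
```

Commands for a task would then run with `subprocess.run(cmd, cwd=workdir, env=env)`, so two concurrent tasks never share a working directory or a cache.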

Issues are the natural unit of work

One design choice that worked particularly well was treating issues as the unit of work. Instead of inventing a new job format or orchestration layer, the system simply watches the issue tracker. When a new issue appears, the agent can pick it up, perform the work, and attach artifacts or updates directly to that issue.

That keeps everything visible in the place engineers already spend their time.

The issue becomes the timeline of the task: the original request, the agent’s artifacts, review comments, revisions, and the final result. You don’t need a separate interface because the workflow already exists.
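Here is a rough sketch of the “watch the tracker” loop, using an in-memory stand-in instead of a real tracker API. `Issue`, `InMemoryTracker`, `poll_once`, and the `open`, `in_progress`, `in_review` states are all hypothetical. Each tick claims open issues, runs the work through a caller-supplied `handle` function, and posts the result as a comment. The issue itself accumulates the timeline.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Issue:
    id: int
    title: str
    state: str = "open"  # hypothetical states: open, in_progress, in_review, done
    comments: list[str] = field(default_factory=list)

class InMemoryTracker:
    """Stand-in for a real issue tracker API; names are illustrative."""

    def __init__(self, issues: list[Issue]):
        self.issues = {i.id: i for i in issues}

    def list_open(self) -> list[Issue]:
        return [i for i in self.issues.values() if i.state == "open"]

def poll_once(tracker: InMemoryTracker, handle: Callable[[Issue], str]) -> list[int]:
    """One polling tick: claim each open issue, do the work, post results to the issue."""
    picked = []
    for issue in tracker.list_open():
        issue.state = "in_progress"           # claim it so other workers skip it
        issue.comments.append(handle(issue))  # artifacts and updates land on the issue
        issue.state = "in_review"             # hand it back to humans
        picked.append(issue.id)
    return picked
```

Against a real tracker, the claim step would need to be atomic, for example a conditional state transition. Otherwise two workers polling at the same moment could pick up the same issue.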

And this is where Symphony’s design choice starts to make a lot more sense.

Why the spec-first approach makes sense

Seen through that lens, leading with the spec looks less wild and more deliberate.

The model calls themselves are relatively straightforward. The complexity comes from integrating agents into real engineering workflows: reviews, artifacts, issue tracking, and infrastructure. Those details will look different for every team.

Providing a spec instead of a single canonical implementation lets the workflow stay consistent while the infrastructure varies. Teams can build their own runtime, integrate with their own systems, and still follow the same behavioral contract.
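The idea of one behavioral contract over varying infrastructure can be sketched with Python’s `typing.Protocol`. The `Tracker` contract and both backends below are hypothetical and are not taken from the Symphony spec. What matters is that `acknowledge_all` is written only against the contract. It behaves the same whether issues live in a dict or in an append-only event log.

```python
from typing import Protocol, runtime_checkable

@runtime_checkable
class Tracker(Protocol):
    """Hypothetical contract a runtime must satisfy."""

    def open_issue_ids(self) -> list[str]: ...
    def post_comment(self, issue_id: str, body: str) -> None: ...

class DictTracker:
    """One team's backend: comment threads kept in a dict keyed by issue ID."""

    def __init__(self, open_ids: list[str]):
        self.open = list(open_ids)
        self.threads: dict[str, list[str]] = {}

    def open_issue_ids(self) -> list[str]:
        return list(self.open)

    def post_comment(self, issue_id: str, body: str) -> None:
        self.threads.setdefault(issue_id, []).append(body)

class LogTracker:
    """Another team's backend: an append-only event log."""

    def __init__(self, open_ids: list[str]):
        self.events = [("opened", i) for i in open_ids]

    def open_issue_ids(self) -> list[str]:
        return [i for kind, i in self.events if kind == "opened"]

    def post_comment(self, issue_id: str, body: str) -> None:
        self.events.append(("comment", f"{issue_id}: {body}"))

def acknowledge_all(tracker: Tracker) -> int:
    """Behavior defined only in terms of the contract, so it runs on either backend."""
    ids = tracker.open_issue_ids()
    for issue_id in ids:
        tracker.post_comment(issue_id, "Picked up by agent.")
    return len(ids)
```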

In other words, the interesting part isn’t the code they shipped.

It’s the pattern they’re trying to standardize.
